Analysis of variance

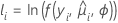

The deviance table is constructed based on the following general result which assumes that ϕ is known. If DI is the deviance associated with an initial model and DS is the deviance associated with a subset of terms in the initial model, then under some regularity conditions, the following relationship exists:

The difference between the deviances is asymptotically distributed as a chi-square distribution with d degrees of freedom. These statistics are calculated for adjusted (type III) analysis and sequential (type I) analysis. The adjusted deviance and the chi-square statistic in the deviance table are equal. The adjusted mean deviance is the adjusted deviance divided by the degrees of freedom.

For the sequential analysis, the output depends on the order that the predictors enter the model. The sequential deviance is the unique portion of the deviance that a predictor explains, given any predictors already in the model. If you have a model with three predictors, X1, X2, and X3, the sequential deviance for X3 shows how much of the remaining deviance that X3 explains given that X1 and X2 are already in the model. To obtain a different sequential deviance, repeat the regression procedure entering the predictors in a different order.

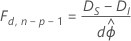

If ϕ is unknown, as for responses that follow a normal distribution, then under some regularity conditions the relationship changes to the following:

Here, the difference between the deviances is asymptotically distributed as an F distribution with d degrees of freedom for the numerator and n − p degrees of freedom for the denominator. To estimate the dispersion parameter, use the initial model.

Notation

| Term | Description |

|---|---|

| yi | the number of events for the ith row |

| the estimated mean response of the ith row |

| mi | the number of trials for the ith row |

| Lf | the log-likelihood of the full model |

| Lc | the log likelihood of the model with a subset of terms from the full model |

| d | the degrees of freedom are the difference between the numbers of parameters in the models to compare |

| ϕ | the dispersion parameter, known to be 1 for the binomial model |

| n | the number of rows in the data |

| p | the regression degrees of freedom for the initial model |

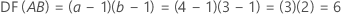

Degrees of freedom (DF)

| Source of variation | DF |

| Model | p |

| Error | n − p − 1 |

| Total | n − 1 |

| Continuous predictors | 1 |

| Categorical predictors | q − 1 |

| Blocks | b − 1 |

Note

For 2-level designs with center points, the degrees of freedom for curvature are 1.

Notation

| Term | Description |

|---|---|

| p | The sum of the degrees of freedom for the predictors. The predictors do not include the constant. |

| n | The number of rows in the design |

| q | The number of levels of the categorical predictor |

| b | The number of blocks |

| a | The number of levels in factor A |

| b | The number of levels in factor B |

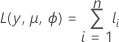

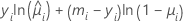

Log-likelihood

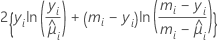

The general form of the individual contributions follows:

Notation

| Term | Description |

|---|---|

| yi | the number of events for the ith row |

| mi | the number of trials for the ith row |

| the estimated mean response of the ith row |

p-value (P)

Used in hypothesis tests to help you decide whether to reject or fail to reject a null hypothesis. The p-value is the probability of obtaining a test statistic that is at least as extreme as the actual calculated value, if the null hypothesis is true. A commonly used cut-off value for the p-value is 0.05. For example, if the calculated p-value of a test statistic is less than 0.05, you reject the null hypothesis.