Step 1: Evaluate the appraiser agreement visually

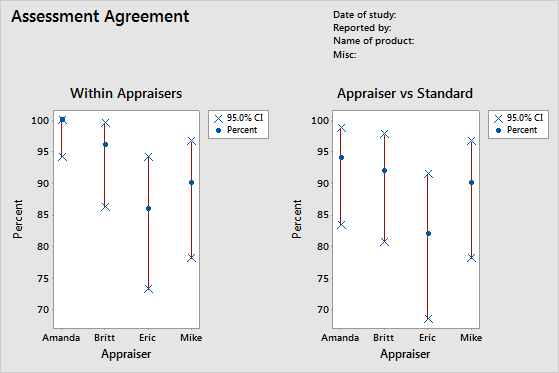

To determine the consistency of each appraiser's ratings, evaluate the Within Appraisers graph. Compare the percentage matched (blue circle) with the confidence interval for the percentage matched (red line) for each appraiser.

To determine the correctness of each appraiser's ratings, evaluate the Appraiser vs Standard graph. Compare the percentage matched (blue circle) with the confidence interval for the percentage matched (red line) for each appraiser.

Note

Minitab displays the Within Appraisers graph only when you have multiple trials.

The Within Appraisers graph indicates that Amanda has the most consistent ratings and Eric has the least consistent ratings. The Appraiser vs Standard graph indicates that Amanda has the most correct ratings and Eric has the least correct ratings.
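To make the plotted quantities concrete, the following is a minimal sketch of how a percentage matched and its confidence interval could be computed for one appraiser. It assumes an exact (Clopper-Pearson) binomial interval, which may differ from the interval Minitab draws. The function names and the ratings are hypothetical and are not taken from the example data.

```python
from math import comb

def binom_cdf(k, n, p):
    """P(X <= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))

def _solve(f, target, iters=100):
    """Bisection on [0, 1] for a function f that decreases in p."""
    lo, hi = 0.0, 1.0
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) > target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def exact_ci(matched, inspected, conf=0.95):
    """Clopper-Pearson interval for a proportion (assumed method, see lead-in)."""
    alpha = 1 - conf
    lower = 0.0 if matched == 0 else _solve(
        lambda p: binom_cdf(matched - 1, inspected, p), 1 - alpha / 2)
    upper = 1.0 if matched == inspected else _solve(
        lambda p: binom_cdf(matched, inspected, p), alpha / 2)
    return lower, upper

def within_appraiser_matches(trials_by_sample):
    """Count samples on which the appraiser gave the same rating in every trial."""
    return sum(1 for trials in trials_by_sample if len(set(trials)) == 1)

# Hypothetical appraiser: two trials on each of 10 samples.
trials_by_sample = [(5, 5), (3, 3), (2, 3), (1, 1), (4, 4),
                    (2, 2), (5, 5), (3, 3), (1, 2), (4, 4)]
matched = within_appraiser_matches(trials_by_sample)
percent_matched = 100 * matched / len(trials_by_sample)
ci_lower, ci_upper = exact_ci(matched, len(trials_by_sample))
print(f"{percent_matched:.1f}% matched, 95% CI ({100 * ci_lower:.1f}%, {100 * ci_upper:.1f}%)")
```

For the Appraiser vs Standard graph, the same interval applies, but a sample would count as matched only when every trial equals the reference value.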

Step 2: Assess the consistency of responses for each appraiser

To determine the consistency of each appraiser's ratings, evaluate the kappa statistics in the Within Appraisers table. When the ratings are ordinal, also evaluate Kendall's coefficients of concordance. Minitab displays the Within Appraisers table when each appraiser rates an item more than once.

Use kappa statistics to assess the degree of agreement of the nominal or ordinal ratings made by multiple appraisers when the appraisers evaluate the same samples.

- When Kappa = 1, perfect agreement exists.

- When Kappa = 0, agreement is the same as would be expected by chance.

- When Kappa < 0, agreement is weaker than expected by chance; this rarely occurs.

The AIAG suggests that a kappa value of at least 0.75 indicates good agreement. However, larger kappa values, such as 0.90, are preferred.
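The kappa scale described above can be illustrated with a short sketch. It assumes Fleiss' formulation of kappa and treats each trial (or each appraiser) as one rating of a sample. The function names, the labels returned by `aiag_label`, and the ratings are hypothetical.

```python
from collections import Counter

def fleiss_kappa(ratings_by_sample):
    """Fleiss' kappa. Each inner list holds the m ratings given to one sample."""
    n_samples = len(ratings_by_sample)
    m = len(ratings_by_sample[0])
    counts = [Counter(sample) for sample in ratings_by_sample]
    categories = set().union(*counts)

    # Observed agreement: share of agreeing rating pairs, averaged over samples.
    p_observed = sum(
        (sum(n * n for n in c.values()) - m) / (m * (m - 1)) for c in counts
    ) / n_samples

    # Chance agreement from the overall share of each category.
    p_chance = sum(
        (sum(c[cat] for c in counts) / (n_samples * m)) ** 2 for cat in categories
    )
    # Undefined (division by zero) when every rating falls in one category.
    return (p_observed - p_chance) / (1 - p_chance)

def aiag_label(kappa):
    """Hypothetical labels for the 0.75 and 0.90 guideline values quoted above."""
    if kappa >= 0.90:
        return "preferred"
    if kappa >= 0.75:
        return "good"
    return "investigate"

# Hypothetical data: two trials per sample.
perfect = [[1, 1], [2, 2], [1, 1], [2, 2]]   # every pair agrees
chance = [[1, 1], [2, 2], [1, 2], [2, 1]]    # agreement no better than chance
print(fleiss_kappa(perfect), fleiss_kappa(chance))
```

With the `perfect` data the function returns 1, and with the `chance` data it returns 0, which correspond to the first two bullet points above.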

When you have ordinal ratings, such as defect severity ratings on a scale of 1–5, Kendall's coefficients, which account for ordering, are usually more appropriate statistics to determine association than kappa alone.
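As an illustration of a statistic that accounts for ordering, the following sketch computes Kendall's coefficient of concordance (W) with the standard correction for tied ranks. It is a textbook formulation under stated assumptions, not a reproduction of Minitab's internal calculation, and the function names and ratings are hypothetical.

```python
from collections import Counter

def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def kendalls_w(ratings_by_rater):
    """Kendall's W. Each inner list holds one rater's (or trial's) ratings of the same n samples."""
    m = len(ratings_by_rater)
    n = len(ratings_by_rater[0])
    ranked = [average_ranks(r) for r in ratings_by_rater]
    rank_sums = [sum(r[i] for r in ranked) for i in range(n)]
    mean_sum = m * (n + 1) / 2
    s = sum((x - mean_sum) ** 2 for x in rank_sums)
    # Tie correction: sum of (t^3 - t) over each group of tied ratings per rater.
    tie_term = sum(t**3 - t for r in ratings_by_rater for t in Counter(r).values())
    # Undefined (division by zero) when every rater gives every sample the same rating.
    return 12 * s / (m**2 * (n**3 - n) - m * tie_term)

# Hypothetical severity ratings on a 1-5 scale: two trials, six samples.
trial_1 = [1, 2, 2, 3, 4, 5]
trial_2 = [1, 2, 3, 3, 4, 5]
print(kendalls_w([trial_1, trial_2]))
```

Unlike kappa, W gives partial credit when two ratings are close on the scale, because it works with ranks rather than exact matches.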

Note

Remember that the Within Appraisers table indicates whether the appraisers' ratings are consistent, but not whether the ratings agree with the reference values. Consistent ratings aren't necessarily correct ratings.

Within Appraisers

Key Results: Kappa, Kendall's coefficient of concordance

Many of the kappa values are 1, which indicates perfect agreement within an appraiser between trials. Some of Eric's kappa values are close to 0.70. You might want to investigate why Eric's ratings of those samples were inconsistent. Because the data are ordinal, Minitab provides the Kendall's coefficient of concordance values. These values are all greater than 0.98, which indicates a very strong association within the appraiser ratings.

Step 3: Assess the correctness of responses for each appraiser

To determine the correctness of each appraiser's ratings, evaluate the kappa statistics in the Each Appraiser vs Standard table. When the ratings are ordinal, you should also evaluate the Kendall's correlation coefficients. Minitab displays the Each Appraiser vs Standard table when you specify a reference value for each sample.

Use kappa statistics to assess the degree of agreement of the nominal or ordinal ratings made by multiple appraisers when the appraisers evaluate the same samples.

- When Kappa = 1, perfect agreement exists.

- When Kappa = 0, agreement is the same as would be expected by chance.

- When Kappa < 0, agreement is weaker than expected by chance; this rarely occurs.

The AIAG suggests that a kappa value of at least 0.75 indicates good agreement. However, larger kappa values, such as 0.90, are preferred.
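For a single appraiser compared with the standard, agreement beyond chance can be sketched with Cohen's kappa. This is an illustrative choice and is not necessarily the kappa variant that Minitab reports in this table. The function name and the ratings are hypothetical.

```python
from collections import Counter

def cohen_kappa(ratings, standard):
    """Cohen's kappa between one set of ratings and the reference values."""
    n = len(ratings)
    # Observed agreement: share of samples where the rating equals the standard.
    p_observed = sum(r == s for r, s in zip(ratings, standard)) / n
    # Chance agreement from the marginal frequency of each category.
    r_counts, s_counts = Counter(ratings), Counter(standard)
    p_chance = sum(r_counts[c] * s_counts[c] for c in r_counts) / n**2
    # Undefined (division by zero) when both sequences use a single, identical category.
    return (p_observed - p_chance) / (1 - p_chance)

# Hypothetical reference values and one appraiser's ratings for eight samples.
standard = [1, 2, 3, 4, 5, 1, 3, 5]
appraiser = [1, 2, 3, 4, 5, 1, 2, 5]   # one sample rated 2 instead of 3
print(cohen_kappa(appraiser, standard))
```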

When you have ordinal ratings, such as defect severity ratings on a scale of 1–5, Kendall's coefficients, which account for ordering, are usually more appropriate statistics to determine association than kappa alone.
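One way to measure ordinal association between an appraiser's ratings and the standard is a Kendall rank correlation. The sketch below assumes the tau-b form, which adjusts for ties. How Minitab combines trials into its reported coefficient is not covered here. The function name and the ratings are hypothetical.

```python
from itertools import combinations
from math import sqrt

def kendall_tau_b(x, y):
    """Kendall's tau-b between two equally long rating sequences."""
    concordant = discordant = ties_x = ties_y = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x1 - x2, y1 - y2
        if dx == 0:
            ties_x += 1          # pair tied in x (possibly in y as well)
        if dy == 0:
            ties_y += 1          # pair tied in y (possibly in x as well)
        if dx * dy > 0:
            concordant += 1      # pair ordered the same way in both sequences
        elif dx * dy < 0:
            discordant += 1      # pair ordered in opposite ways
    n_pairs = len(x) * (len(x) - 1) // 2
    # Undefined (division by zero) when either sequence is constant.
    return (concordant - discordant) / sqrt((n_pairs - ties_x) * (n_pairs - ties_y))

# Hypothetical reference values and one appraiser's ratings for eight samples.
standard = [1, 2, 3, 4, 5, 1, 3, 5]
appraiser = [1, 2, 3, 4, 5, 1, 2, 5]   # off by one step on a single sample
print(kendall_tau_b(appraiser, standard))
```

A rating that misses the standard by one step lowers this coefficient only slightly, whereas kappa counts it as a full disagreement.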

Each Appraiser vs Standard

Key Results: Kappa, Kendall's correlation coefficient

Most of the kappa values are larger than 0.80, which indicates good agreement between each appraiser and the standard. A few of the kappa values are close to 0.70, which indicates that you may need to investigate certain samples or certain appraisers further. Because the data are ordinal, Minitab provides Kendall's correlation coefficients. These values range from 0.951863 to 0.975168, which indicates a strong association between the ratings and the standard values.

Step 4: Assess the consistency of responses between appraisers

To determine the consistency between the appraisers' ratings, evaluate the kappa statistics in the Between Appraisers table. When the ratings are ordinal, you should also evaluate the Kendall's coefficient of concordance.

Use kappa statistics to assess the degree of agreement of the nominal or ordinal ratings made by multiple appraisers when the appraisers evaluate the same samples.

- When Kappa = 1, perfect agreement exists.

- When Kappa = 0, agreement is the same as would be expected by chance.

- When Kappa < 0, agreement is weaker than expected by chance; this rarely occurs.

The AIAG suggests that a kappa value of at least 0.75 indicates good agreement. However, larger kappa values, such as 0.90, are preferred.
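When a table lists several kappa values, it can help to see how a kappa for one rating category is formed. The sketch below assumes Fleiss' per-category formula, with each appraiser contributing one rating per sample. It is offered as an illustration and may not match Minitab's calculation in every detail. The function name and the ratings are hypothetical.

```python
def fleiss_kappa_by_category(ratings_by_sample):
    """Fleiss' kappa for each rating category.

    Each inner list holds the m ratings that the appraisers gave to one sample.
    """
    n_samples = len(ratings_by_sample)
    m = len(ratings_by_sample[0])
    categories = sorted({r for sample in ratings_by_sample for r in sample})
    result = {}
    for category in categories:
        # How many of the m ratings of each sample fall in this category.
        counts = [sample.count(category) for sample in ratings_by_sample]
        p = sum(counts) / (n_samples * m)
        # Pairs of ratings that split between this category and any other.
        disagreement = sum(k * (m - k) for k in counts)
        # Undefined (division by zero) when every rating is this category.
        result[category] = 1 - disagreement / (n_samples * m * (m - 1) * p * (1 - p))
    return result

# Hypothetical data: three appraisers rate four samples.
ratings_by_sample = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [1, 1, 2]]
kappas = fleiss_kappa_by_category(ratings_by_sample)
for category, value in kappas.items():
    print(category, round(value, 3), "ok" if value >= 0.75 else "investigate")
```

In this hypothetical data, the appraisers split only between categories 1 and 2, so those categories receive kappa values below 1 while category 3 does not.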

When you have ordinal ratings, such as defect severity ratings on a scale of 1–5, Kendall's coefficients, which account for ordering, are usually more appropriate statistics to determine association than kappa alone.

Note

Remember that the Between Appraisers table indicates whether the appraisers' ratings are consistent, but not whether the ratings agree with the reference values. Consistent ratings aren't necessarily correct ratings.

Between Appraisers

Key Results: Kappa, Kendall's coefficient of concordance

All the kappa values are larger than 0.77, which indicates minimally acceptable agreement between appraisers. The appraisers have the most agreement for samples 1 and 5, and the least agreement for sample 3. Because the data are ordinal, Minitab provides the Kendall's coefficient of concordance (0.976681), which indicates a very strong association between the appraiser ratings.

Step 5: Assess the correctness of responses for all appraisers

To determine the correctness of all the appraisers' ratings, evaluate the kappa statistics in the All Appraisers vs Standard table. When the ratings are ordinal, you should also evaluate the Kendall's coefficients of concordance.

Use kappa statistics to assess the degree of agreement of the nominal or ordinal ratings made by multiple appraisers when the appraisers evaluate the same samples.

- When Kappa = 1, perfect agreement exists.

- When Kappa = 0, agreement is the same as would be expected by chance.

- When Kappa < 0, agreement is weaker than expected by chance; this rarely occurs.

The AIAG suggests that a kappa value of at least 0.75 indicates good agreement. However, larger kappa values, such as 0.90, are preferred.

When you have ordinal ratings, such as defect severity ratings on a scale of 1–5, Kendall's coefficients, which account for ordering, are usually more appropriate statistics to determine association than kappa alone.

All Appraisers vs Standard

Key Results: Kappa, Kendall's coefficient of concordance

These results show that all the appraisers correctly matched the standard ratings on 37 of the 50 samples. The overall kappa value is 0.912082, which indicates strong agreement with the standard values. Because the data are ordinal, Minitab provides the Kendall's coefficient of concordance (0.965563), which indicates a strong association between the ratings and the standard values.
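The matched count reported above can be sketched as follows. A sample counts as matched only when every trial by every appraiser equals the reference value. The function name, the appraiser labels, and the small data set are hypothetical; only the 37 of 50 figure comes from the results above.

```python
def all_match_standard(ratings, standard):
    """Count samples on which every trial by every appraiser equals the standard.

    ratings: dict mapping an appraiser label to a list of trials,
             where each trial is a list with one rating per sample.
    standard: list with one reference value per sample.
    """
    matched = 0
    for i, reference in enumerate(standard):
        if all(trial[i] == reference
               for trials in ratings.values()
               for trial in trials):
            matched += 1
    return matched

# Hypothetical data: two appraisers, two trials each, five samples.
standard = [1, 2, 3, 4, 5]
ratings = {
    "A": [[1, 2, 3, 4, 5], [1, 2, 3, 4, 5]],
    "B": [[1, 2, 2, 4, 5], [1, 2, 3, 4, 4]],   # misses sample 3 once and sample 5 once
}
matched = all_match_standard(ratings, standard)
print(f"{matched} of {len(standard)} samples matched")

# Percentage for the result quoted above: 37 matched out of 50 inspected.
percent_from_text = 100 * 37 / 50   # 74.0
```

Because a single miss by any appraiser in any trial removes the sample from the count, this percentage is typically lower than each appraiser's individual percentage matched.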